- NAB Show New York is Back

- NAB Show Turns 100

- NAB Show Amplified

-

NAB Show New York is Back

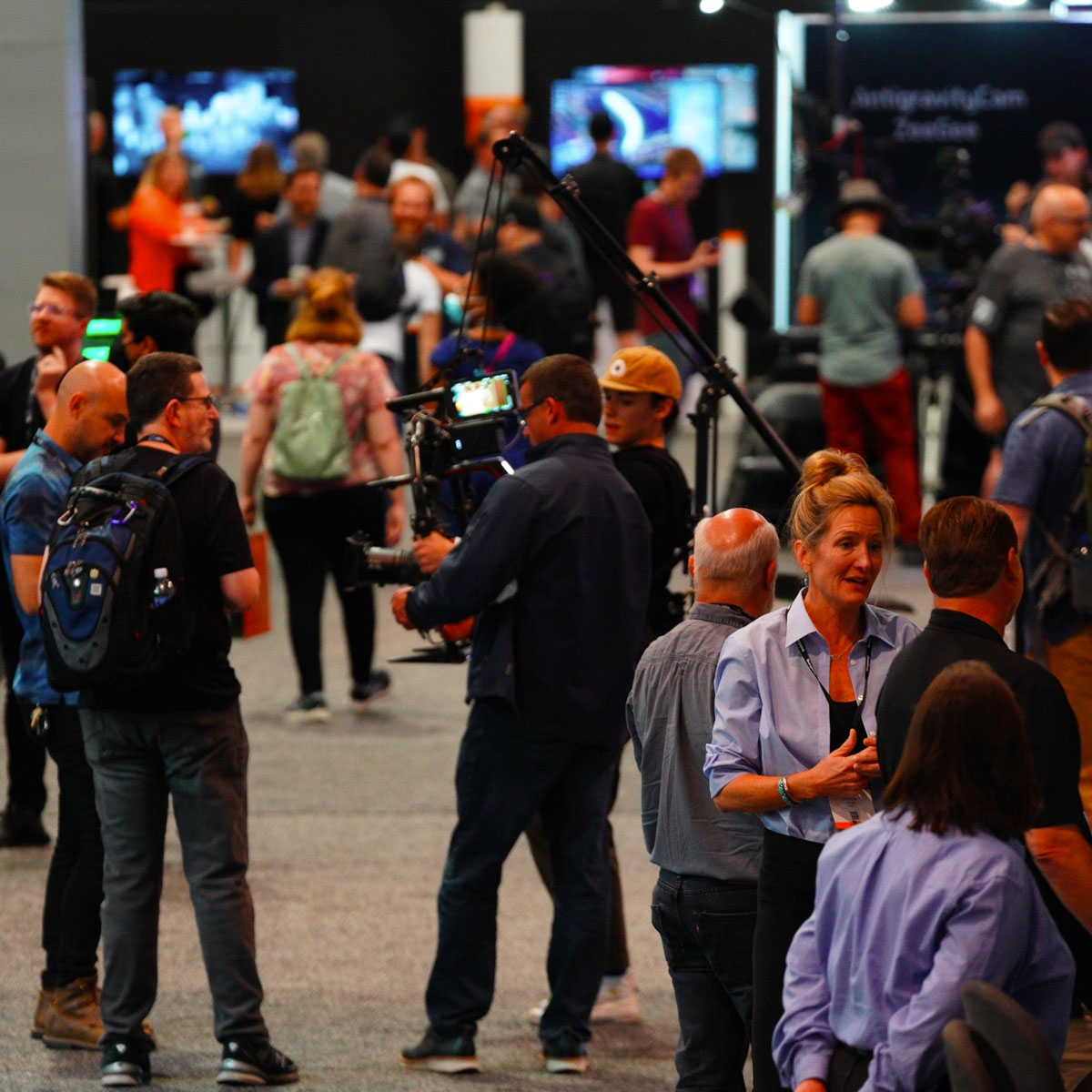

It’s time to get down to business: the business of being hands-on and connecting with all the right people, knowledge, skills and technology that have propelled broadcast, media and entertainment to a whole new level.

-

NAB Show Turns 100

Save the date for the 2023 NAB Show to celebrate 100 years of innovation. Be part of show history April 15-19.

-

NAB Show Amplified

Best of 2022 NAB Show

Gain access to 25 of the most popular sessions from the 2022 NAB Show. These sessions are divided into five themed tracks that are available for purchase.
